Air traffic control (ATC) instructions are still usually given to pilots via voice communication. To be safe and efficient, however, ATC systems need up-to-date data, so air traffic controllers (ATCOs) must make many inputs to keep the system data correct. Mouse and keyboard are mostly used for this purpose, which generates a high workload for the ATCO.

Automatic speech recognition converts speech to text and is, therefore, an alternative input modality. The projects AcListant® and AcListant®-Strips have shown that Assistant Based Speech Recognition (ABSR) can significantly reduce controllers’ workload and increase ATM efficiency (fuel savings of 50 to 65 litres per flight).

One main obstacle to transferring ABSR from the laboratory to operational systems is the cost of deployment, because modern speech recognition models require manual adaptation to local requirements (local accents, phraseology deviations, environmental constraints, etc.). AcListant®, for example, needed €1.3 million for development and validation for the Düsseldorf approach area.

The Horizon 2020 SESAR project MALORCA (Machine Learning of Speech Recognition Models for Controller Assistance) is partly funded by the SESAR Joint Undertaking (Grant Number 698824). MALORCA proposes a general, cheap and effective solution to automate this re-learning, adaptation and customisation process by automatically learning local speech recognition and controller models from radar and speech data recordings.

The German Aerospace Center (DLR), Saarland University (USAAR), Idiap Research Institute (Idiap), Austro Control Österreichische Gesellschaft für Zivilluftfahrt mit beschränkter Haftung (ACG), and Air Navigation Services of the Czech Republic (ANS CR) work together to automatically and more efficiently improve speech recognition models for assistance at different controller working positions.

Description

MALORCA (Machine Learning of Speech Recognition Models for Controller Assistance) covers a 24-month period and started in April 2016 with a kick-off meeting at the Braunschweig site of DLR.

The projects AcListant® and AcListant®-Strips have demonstrated that controllers can be supported by Assistant Based Speech Recognition (ABSR) with command error rates below 2%. ABSR uses speech recognition embedded in a controller assistant system, which provides a dynamic minimized world model to the speech recognizer. The speech recognizer and the assistant system improve each other: the assistant system significantly reduces the search space of the speech recognizer, resulting in low command recognition error rates. However, transferring the developed ABSR prototype to different operational environments, i.e. different approach areas, can be very expensive, because the system requires adaptation to specific local needs such as speaker accents, special airspace characteristics, or local deviations from ICAO standard phraseology. The goal of this project is to solve these issues with a machine learning approach that provides a cheap, efficient and reliable process for the successful deployment of ABSR systems for ATC tasks, from the laboratory to the real world.
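The way an assistant system can narrow the recognizer's search space may be illustrated with a minimal sketch. It is not the AcListant® or MALORCA implementation: the `Command` type, the `predict_context` and `pick_hypothesis` functions, the callsigns and the value ranges are all hypothetical, and the context is applied here as a simple filter on an n-best list after recognition, which is only one of several possible ways to use it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Command:
    """Hypothetical, simplified command: callsign plus one instruction."""
    callsign: str
    instruction: str
    value: int


def predict_context(radar_callsigns, allowed_values):
    """Build the set of plausible commands from the callsigns currently
    on radar and the values allowed per instruction (assumed inputs)."""
    return {
        Command(callsign, instruction, value)
        for callsign in radar_callsigns
        for instruction, values in allowed_values.items()
        for value in values
    }


def pick_hypothesis(nbest, context):
    """Return the best-scoring hypothesis that lies inside the context,
    or None if every hypothesis is implausible (i.e. reject)."""
    for command, _score in sorted(nbest, key=lambda h: h[1], reverse=True):
        if command in context:
            return command
    return None


# Toy situation: two aircraft on radar, two instruction types.
context = predict_context(
    ["DLH123", "AUA45"],
    {"DESCEND": range(40, 130, 10), "REDUCE": range(160, 260, 10)},
)

# The recognizer's top hypothesis contains a callsign that is not on radar.
nbest = [
    (Command("DLH128", "DESCEND", 80), 0.52),
    (Command("DLH123", "DESCEND", 80), 0.48),
]
best = pick_hypothesis(nbest, context)
print(best)
```

In this toy case the higher-scoring hypothesis is discarded because no aircraft with that callsign is in the predicted context, so the second hypothesis is selected.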

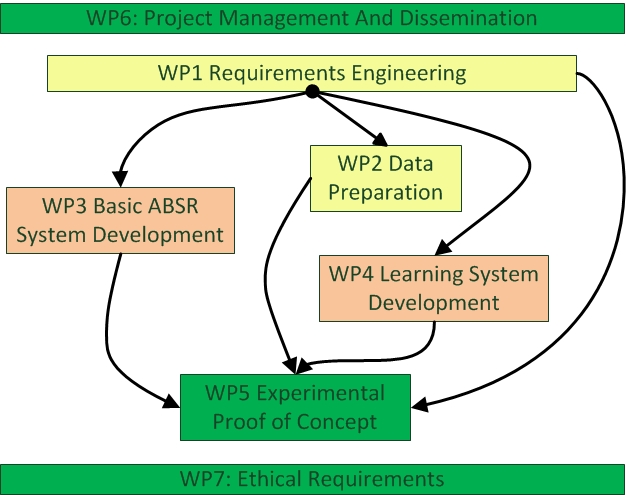

The project is divided into five main work packages (WP1-5), complemented by project management and dissemination in WP6 and the Ethical Requirements in WP7. The project starts in WP1 with an analysis of the requirements with respect to the users’ needs and especially with respect to data availability, in order to provide the other WPs with clear, coherent, and well-established requirements that act as essential building blocks for all the defined modules. WP1 will also elaborate on compliance with the ethical standards to guarantee protection of personal data.

WP2 will be responsible for delivering a first set of target-data (real speech recordings from air traffic controllers) for both selected approach areas (i.e. Prague and Vienna). In a first iteration, approximately 10 hours of real-time controller voice communication together with the associated metadata (contextual information from different sensors) will be provided. A fully manual pre-segmentation and transcription will be performed on that data.

In WP3 the specific knowledge suitable only for AcListant® end-users (airport of Düsseldorf) will be removed and the respective modules will be manually adapted to the Vienna and Prague approach areas (the test sites of this project). Afterwards the transcribed data from WP2, enriched with interviews provided by the air navigation service providers (ANSPs) ACG and ANS CR, will be used to build an initial assistant based automatic speech recognizer (ABSR-0) for a generic approach area together with an integrated basic recognition model.

The ABSR-0 will represent the starting point of WP4. A significant amount of training data (approximately 100 hours of speech recordings) will be provided by each of the two ANSPs, together with the associated context information from other sensors. In contrast to WP2, the speech recordings will not be transcribed manually. WP4 will instead rely on methods of unsupervised learning and data mining to automatically generate an improved recognition model.

WP5 will be related to the experimental proof of concept. As controllers are end users of speech recognition, their feedback is essential. WP5 will, therefore, start with speech recordings (controller voice communication) together with the associated context for both approach areas. These data sets will not be used by the WP4 learning algorithms; instead they will serve as evaluation data. The controllers will be integrated into the feedback process by presenting their own speech recordings together with the radar data to them. The controller will listen to the communication and will evaluate ABSR performance. In parallel the speech recordings will also be manually transcribed, so that objective recognition accuracy will be available.
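How an objective accuracy figure could be derived from manually transcribed evaluation data can be sketched as follows. The metric definitions are an assumption made for illustration (one command per utterance, a recognizer output of `None` counted as a rejection); the project's exact definitions are not given here, and the function name `command_rates` is hypothetical.

```python
def command_rates(gold, recognized):
    """Compare recognized commands against manually annotated ones.

    gold:       list of annotated commands, one per utterance
    recognized: list of recognizer outputs, None meaning "rejected"
    Assumed definitions: correct = exact match, rejected = no output,
    error = any other output.
    """
    if len(gold) != len(recognized) or not gold:
        raise ValueError("inputs must be non-empty and of equal length")
    total = len(gold)
    correct = sum(1 for g, r in zip(gold, recognized) if r == g)
    rejected = sum(1 for r in recognized if r is None)
    errors = total - correct - rejected
    return {
        "recognition_rate": correct / total,
        "error_rate": errors / total,
        "rejection_rate": rejected / total,
    }


# Toy evaluation set of four utterances (invented data).
gold = ["DLH123 DESCEND 80", "AUA45 REDUCE 200", "CSA7 DESCEND 60", "DLH9 REDUCE 180"]
recognized = ["DLH123 DESCEND 80", "AUA45 REDUCE 220", None, "DLH9 REDUCE 180"]
rates = command_rates(gold, recognized)
print(rates)
```

Separating rejections from errors reflects the idea that an output the system withholds is less harmful than a wrong command shown to the controller.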

References

One promising approach to improve ASR performance is using context knowledge regarding expected utterances. This information may heavily reduce the search space and lead to fewer missed recognitions. Oualil et al. [1] analyzed the benefits of using context information for pre-processing versus using context for post-recognition.

Helmke et al. extended the use of context by generating it from an assistance system, i.e. an Arrival Manager (AMAN), to support ABSR [2]. In 2016, it was shown that ABSR significantly reduces controllers’ workload, which translates into reduced fuel burn and increased runway throughput. These results were quantified in [3] and [4], respectively. The MALORCA project aims at automatically adapting the speech recognition building blocks to different approach areas. Learning of command prediction, i.e. the relevant part of the assistant system, was described in [5]. Automatic adaptation results for the Vienna and Prague approach areas from 22 hours of controller-pilot speech recordings and the corresponding radar tracks were presented in [6]. Command recognition error rates of the baseline system were reduced from 7.9% to below 0.6% for Prague and from 18.9% to 3.2% for Vienna. The building blocks and their adaptation to different approach areas were presented in [7]. Most recently, it was reported that no safety issues were observed: the controllers detected all misrecognitions when speech recognition failed [8].

The MALORCA project continues exploring Statistical Language Models (SLMs). Even though SLMs have been shown to perform better than grammar-based models [9], the MALORCA project has raised the unique challenge of combining both model types, as the initial amount of transcribed data is relatively small (< 4 hours). As this can lead to poor coverage of ATM commands, the MALORCA project alleviates this problem by leveraging the ICAO grammar and constructing a hybrid SLM from this grammar and an already trained SLM.

The grammar specifies the set of rules that define the correspondence of command words to ATM concepts. These concepts serve as classes that can be used to build a class-based SLM, which has been shown to improve ASR performance. Intuitively, this class-based language model (LM) overcomes the lack of data by mapping everything to a class space. In this class space, correlations can be learned at the concept level, unlike with the regular SLMs used earlier [1]. The class-based LMs and regular SLMs are linearly interpolated [10] to produce the final hybrid SLM. Eventually, this hybrid LM is converted to a first-pass decoding finite state transducer and employed in the ABSR pipeline; see [11] for details of acoustic model (AM) and LM training in the MALORCA project.
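The combination of a class-based LM with a regular word-based SLM can be illustrated with a toy bigram example. This is a minimal sketch, not the MALORCA training code: the vocabulary, the classes, all probabilities and the interpolation weight `lam` are invented for illustration, and the class-based probability uses the standard factorization P(w | h) = P(c(w) | c(h)) · P(w | c(w)).

```python
def class_bigram_prob(prev, word, word2class, class_bigram, word_given_class):
    """P(word | prev) under a class-based bigram model:
    P(class(word) | class(prev)) * P(word | class(word))."""
    c_prev = word2class[prev]
    c_word = word2class[word]
    p_transition = class_bigram.get((c_prev, c_word), 0.0)
    p_emission = word_given_class.get((word, c_word), 0.0)
    return p_transition * p_emission


def interpolate(p_class, p_word, lam):
    """Linear interpolation of two probability estimates, 0 <= lam <= 1."""
    if not 0.0 <= lam <= 1.0:
        raise ValueError("lam must lie in [0, 1]")
    return lam * p_class + (1.0 - lam) * p_word


# Invented toy models. The word sequence "level eight" never occurred in
# the small transcribed set, so the word-based bigram assigns it 0.
word2class = {"level": "LEVEL_KEYWORD", "eight": "DIGIT", "nine": "DIGIT"}
class_bigram = {("LEVEL_KEYWORD", "DIGIT"): 0.5}
word_given_class = {("eight", "DIGIT"): 0.25, ("nine", "DIGIT"): 0.25}
word_bigram = {("level", "nine"): 0.5}

p_class = class_bigram_prob("level", "eight", word2class, class_bigram, word_given_class)
p_word = word_bigram.get(("level", "eight"), 0.0)
p_hybrid = interpolate(p_class, p_word, lam=0.5)
print(p_class, p_word, p_hybrid)  # 0.125 0.0 0.0625
```

The unseen word pair still receives a non-zero probability in the hybrid model because the class space has seen a digit following a level keyword, which is the coverage effect described above.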

The problem with a pure grammar-based approach is that controllers often deviate from ICAO standard phraseology. Unambiguous rules for annotation are needed to enable the exchange of data between different annotators or even between different approach areas. Such a solution cannot be provided by researchers alone, because it requires detailed and specific domain knowledge as well as the formation of a joint view on the problem. Only a broad industrial consortium is in a position to create an appropriate solution. In this case, the structure of SESAR with its exploratory and industrial research parts plays an important role. As several partners of the MALORCA project are part of both the exploratory and the industrial research parts of SESAR, they are in a position to bridge the gap between research and industry by aligning the work between these parts and thereby speed up the deployment of new technologies in ATM. The SESAR 2020 funded solution 16-04 agreed on an ontology, i.e. unambiguous rules, for command transcription and annotation [12]. The main elements of the ontology, agreed by the 16-04 partners, are callsign and instruction. The 16-04 partners include Air Navigation Service Providers (ANS CR, Avinor, Austro Control, DFS, LFV, NATS, Romatsa), research institutes (CRIDA, DLR), the ATM supplier industry (Frequentis, Indra, and Thales), and Integra as ATM consultancy.
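Why shared annotation rules make data exchangeable can be shown with a small data-structure sketch. Only the two top-level elements, callsign and instruction, come from the text above; the breakdown of an instruction into `type`, `value` and `unit`, the whitespace-separated line format and the function `parse_annotation` are assumptions made for illustration and are not the agreed ontology itself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Instruction:
    """Hypothetical instruction layout: a type plus optional value and unit."""
    type: str
    value: Optional[str] = None
    unit: Optional[str] = None


@dataclass(frozen=True)
class Annotation:
    """One annotated command: the two main elements named in the text."""
    callsign: str
    instruction: Instruction


def parse_annotation(line):
    """Parse an assumed 'CALLSIGN TYPE [VALUE [UNIT]]' annotation line."""
    parts = line.split()
    if len(parts) < 2:
        raise ValueError("expected at least a callsign and an instruction type")
    callsign, instruction_type, *rest = parts
    value = rest[0] if len(rest) > 0 else None
    unit = rest[1] if len(rest) > 1 else None
    return Annotation(callsign, Instruction(instruction_type, value, unit))


# Two annotators writing the same command with different spacing
# produce identical structured annotations.
first = parse_annotation("AUA45 DESCEND 80 FL")
second = parse_annotation("AUA45   DESCEND  80  FL")
print(first == second)  # True
```

Once annotations follow one structure, results from different annotators or approach areas can be compared field by field instead of as free text.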

The recognition performance achieved in MALORCA for the Prague and Vienna approach areas is recapitulated, and new statistics obtained from various error analyses are presented. Results are detailed for different types of ATC commands, followed by the reasons for the performance drops [13].

Speech recognition results and ATCO feedback are reported for a Commercial-Off-The-Shelf (COTS) speech recognizer from Nuance used in combination with the Command Prediction and Checker components of DLR [14].

Based on the experience with an ATC approach hypotheses generator, a prototype tower command hypotheses generator (TCHG) was developed to face current and future challenges in the aerodrome environment [15].

© 2016 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works

© 2018 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works
